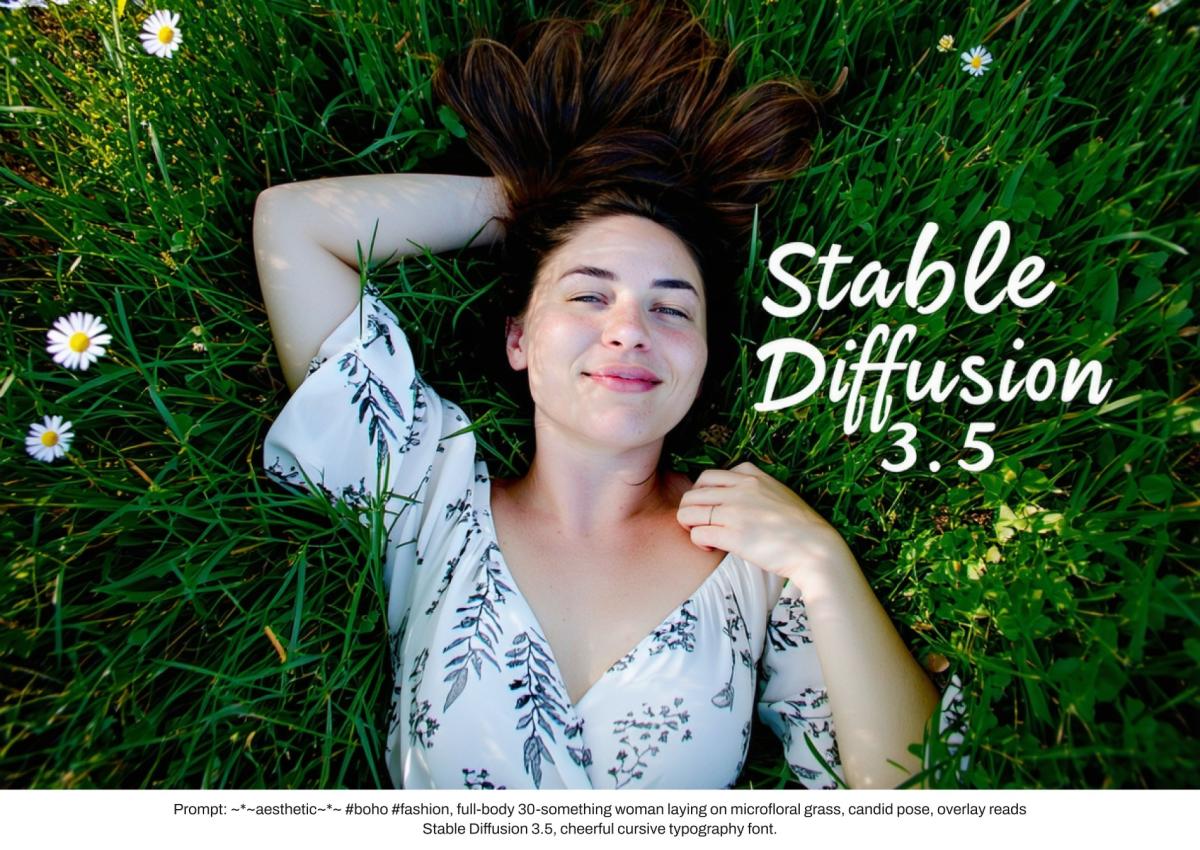

Stable Diffusion, an open-source alternative to AI image generators like Midjourney and DALL-E, has been updated to version 3.5. The new model tries to right some of the wrongs (which may be an understatement) of the widely panned Stable Diffusion 3 Medium. Stability AI says the 3.5 model adheres to prompts better than other image generators and competes with much larger models in output quality. In addition, it’s tuned for a greater diversity of styles, skin tones and features without needing to be prompted to do so explicitly.

The new model comes in three flavors. Stable Diffusion 3.5 Large is the most powerful of the trio, with the highest quality of the bunch, while leading the industry in prompt adherence. Stability AI says the model is suitable for professional uses at 1 MP resolution.

Meanwhile, Stable Diffusion 3.5 Large Turbo is a “distilled” version of the larger model, focusing more on efficiency than maximum quality. Stability AI says the Turbo variant still produces “high-quality images with exceptional prompt adherence” in four steps.

Finally, Stable Diffusion 3.5 Medium (2.5 billion parameters) is designed to run on consumer hardware, balancing quality with simplicity. With its greater ease of customization, the model can generate images between 0.25 and 2 megapixel resolution. However, unlike the first two models, which are available now, Stable Diffusion 3.5 Medium doesn’t arrive until October 29.

The new trio follows the botched Stable Diffusion 3 Medium in June. The company admitted that the release “didn’t fully meet our standards or our communities’ expectations,” as it produced some laughably grotesque body horror in response to prompts that asked for no such thing. Stability AI’s repeated mentions of exceptional prompt adherence in today’s announcement are likely no coincidence.

Although Stability AI only briefly mentioned it in its announcement blog post, the 3.5 series has new filters to better reflect human diversity. The company describes the new models’ human outputs as “representative of the world, not just one type of person, with different skin tones and features, without the need for extensive prompting.”

Let’s hope it’s sophisticated enough to account for subtleties and historical sensitivities, unlike Google’s debacle from earlier this year. Unprompted to do so, Gemini produced collections of egregiously inaccurate historical “photos,” like ethnically diverse Nazis and US Founding Fathers. The backlash was so intense that Google didn’t reincorporate human generations until six months later.