X has released its latest Transparency Report, revealing a comprehensive overview of the enforcement actions undertaken during the latter half of 2024. This report highlights the organization’s responses to various rule violations, government requests, and other significant actions aimed at maintaining platform integrity.

X claims notable improvements in its fight against spam, citing a 19% decrease compared to the first half of the year, but the report also contains several findings that merit closer examination.

First, X provides a detailed breakdown of the total enforcement actions taken in response to identified rule violations from July to December 2024. This data is crucial for understanding how content moderation and compliance on the platform are changing.

When comparing the current report to X’s previous transparency update, which covered the first half of 2024, several anomalies emerge that warrant attention, particularly in the context of content moderation strategies.

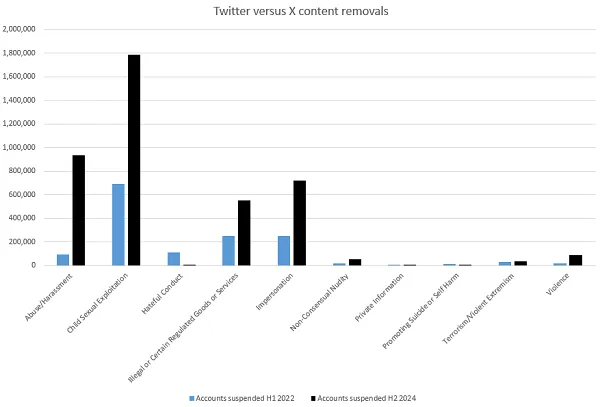

X removed 1.16 million fewer posts for “Abuse and Harassment” than in the previous period, a staggering 43% drop compared to the last report. Account suspensions in the same category, however, fell by only 164,000, a more modest decline of 15%. This disparity suggests a potential shift in how X handles such reports and raises questions about the effectiveness of its current moderation policies.
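As a rough check on these figures, the absolute and percentage drops can be combined to back out the totals they imply. The sketch below is illustrative only: the function name `implied_totals` is hypothetical, the results are not quoted from X's report, and it assumes each percentage is measured against the previous period's total and is rounded.

```python
def implied_totals(absolute_drop: float, percent_drop: float) -> tuple[float, float]:
    """Back out (previous, current) totals from an absolute and a relative drop.

    Assumes percent_drop is expressed relative to the previous period's total.
    """
    if not 0 < percent_drop < 100:
        raise ValueError("percent_drop must be between 0 and 100")
    previous = absolute_drop / (percent_drop / 100)
    current = previous - absolute_drop
    return previous, current


# Figures quoted in the article for "Abuse and Harassment".
removals_prev, removals_now = implied_totals(1_160_000, 43)
suspensions_prev, suspensions_now = implied_totals(164_000, 15)

print(f"Implied removals:    {removals_prev:,.0f} -> {removals_now:,.0f}")
print(f"Implied suspensions: {suspensions_prev:,.0f} -> {suspensions_now:,.0f}")
```

Because the published percentages are rounded, the implied totals are approximate (roughly 2.7 million removals and 1.1 million suspensions in the earlier period) and should be checked against the report itself.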

Moreover, removals of content related to “Child Sexual Exploitation” fell by an alarming 80%, while account suspensions for the same violation saw a smaller decrease of 35%. This discrepancy points to either a reduction in the frequency of such posts or a reassessment of how X combats this serious issue. In the latter case, more could be done to ensure user safety on the platform.

Child safety has been a stated priority for Musk and his team since his acquisition of the platform. The drastic reduction in removals could mean that users are posting this type of content less frequently, or it may reflect a change in X’s operational priorities. It is also possible that X is not enforcing its policies as rigorously as before, which would have significant implications for user safety.

Regardless of the reasons, it’s noteworthy that X is suspending a significantly higher number of accounts related to this issue than Twitter did in its final transparency report before Musk’s acquisition. Back in 2022, Twitter indicated that it had suspended 696,000 accounts in the first half of the year for “Child Sexual Exploitation.” Despite the recent decline in suspensions, X’s current action rate remains more than double that of its predecessor.

Additionally, X suspended 2,300 accounts for “Hateful conduct” during the second half of 2024. In contrast, Twitter reported suspending 111,000 accounts for the same reason in 2022. This highlights a notable difference in enforcement metrics and raises questions about the effectiveness of X’s moderation policies under its new governance.

Although X is suspending fewer accounts for hate speech than Twitter did, the platform has emphasized its commitment to a “freedom of speech, not reach” philosophy. This approach reflects a complex balancing act between preserving user freedom and ensuring that harmful content is adequately addressed.

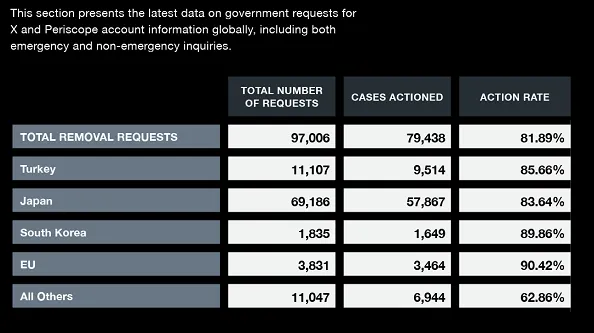

On the topic of legal requests, X received 97,000 legal action requests in the second half of 2024 and complied with 82% of them. That is a notable increase from the 70% compliance rate in X’s previous report, and a considerable jump from the 54% rate that Twitter reported in its H1 2021 data.
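To put the compliance figures in concrete terms, the short sketch below converts the rate into an approximate request count and expresses the changes in percentage points. It is a back-of-the-envelope illustration: it assumes the 82% rate applies to all 97,000 requests and that the three rates are measured in a comparable way, neither of which the article confirms.

```python
# Figures quoted in the article; rates are kept as whole percentages
# so the arithmetic stays exact.
requests_received = 97_000
rate_current = 82       # X, current report
rate_previous = 70      # X, previous report
rate_twitter_2021 = 54  # Twitter, H1 2021

# Approximate number of requests X acted on, under the stated assumption.
requests_complied = requests_received * rate_current // 100

# Change in compliance, in percentage points.
points_vs_previous = rate_current - rate_previous
points_vs_twitter = rate_current - rate_twitter_2021

# Relative increase over the previous report's rate, in percent.
relative_increase = points_vs_previous / rate_previous * 100

print(f"Requests complied with: about {requests_complied:,}")
print(f"Change vs previous report: +{points_vs_previous} points "
      f"({relative_increase:.1f}% relative increase)")
print(f"Change vs Twitter H1 2021: +{points_vs_twitter} points")
```

Keeping percentage points and relative change separate avoids a common misreading: a move from 70% to 82% is a 12-point increase, which is about a 17% relative rise.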

This improved compliance rate suggests a stronger commitment to meeting legal obligations, which is notable given X’s public pushback against Brazilian court orders and removal requests from Australian authorities. Overall, the figures indicate that X is complying with most of the legal demands it receives across the jurisdictions in which it operates.

It is not surprising that the top countries making legal requests include Turkey, Japan, South Korea, and EU nations. Historically, Turkey, South Korea, and India have been among the most active in requesting removals, and that pattern largely holds in X’s current report.

Questions arise regarding Elon Musk’s business dealings and relationships with these nations, which may influence X’s responsiveness to such requests. Despite Musk’s public advocacy for broader freedom of speech, the data shows that X is removing considerably more content in response to government requests than Twitter did, while also suspending a greater number of users overall.

While the rationale behind these actions may differ, with fewer users facing suspension for hate speech, the overall data suggests that X is adopting a far more restrictive approach to content moderation compared to its predecessor. This shift in strategy may reflect a commitment to ensuring brand safety and user protection, even if it appears less active in certain areas.

The comparative analysis reveals that X is suspending more accounts across all categories, with the exception of hate speech, despite maintaining a similar number of active users. This indicates that even with its current approach of penalizing reach over account restrictions, X is still taking significant action to enforce its policies.

This raises important questions regarding the nature of X’s enforcement strategies. Are these figures indicative of a more rigorous enforcement process, or do they suggest selective punishments in specific areas? If accurate, this data demonstrates X’s commitment to user and brand safety, even if it does not align with the perceptions of its moderation effectiveness in certain domains.

In summary, X finds itself in a challenging position. If it continues to suspend a greater number of users, its much-touted “free speech” approach may come under scrutiny. Conversely, if it fails to act decisively, it risks being seen as permissive under its revised content strategy, leading to potential backlash from users and stakeholders alike.

Ultimately, the data reflects the ongoing struggle that X faces in balancing enforcement actions with the principles of free expression. This challenge is not unique to X, as it mirrors the difficulties encountered by numerous social media platforms in today’s digital landscape.

For more detail, see X’s latest Transparency Report.