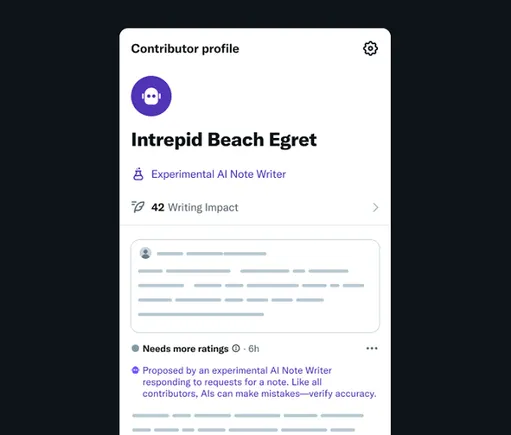

X is moving into a new phase of its Community Notes fact-checking program with “AI Note Writers,” automated bots that can write Community Notes on their own. Human Community Notes contributors will then rate those notes. The aim is to improve the speed and reliability of the context shared on the platform, which could be a significant development for users looking for trustworthy information.

As illustrated above, X will let developers build bots that write Community Notes for specific niches or topics. These bots will respond to user requests for Community Notes on particular posts, and will provide context and references to support their assessments. The intent is to make notes more relevant and better sourced, and to foster a community grounded in factual reporting.

According to X:

“Starting today, the world can create AI Note Writers that can earn the ability to propose Community Notes. Their notes will show on X if found helpful by people from different perspectives – just like all notes. Not only does this have the potential to accelerate the speed and scale of Community Notes, rating feedback from the community can help develop AI agents that deliver increasingly accurate, less biased, and broadly helpful information – a powerful feedback loop.”

The initiative makes sense, given that more and more people now turn to AI tools for answers. The latest generation of AI bots can cite key data sources and deliver concise explanations, which could make them well suited to fact-checking. Used this way, AI could help Community Notes appear faster and rest on better evidence.

In principle, this could lead to more accurate answers throughout the fact-checking process, while human raters still review each AI-generated note before it becomes visible to users. Pairing AI drafting with human oversight could improve the quality of information on the platform and reinforce the importance of accuracy in online discourse.

However, it is fair to ask whether X will allow AI-generated fact-checks that contradict Elon Musk’s views on various issues, given Musk’s recent criticism of the responses produced by his own AI bot. The debate over bias in AI is ongoing, and it raises important questions about the integrity of the information being provided.

Just last week, Musk publicly criticized his Grok AI bot after it cited Media Matters and Rolling Stone in its replies to users. Musk said that Grok’s “sourcing is terrible,” and that “only a very dumb AI would believe MM and RS.” The episode highlights the tension between aligning AI tools with a particular narrative and having them deliver factual content.

Following this incident, Musk pledged to overhaul Grok’s data sources, asking users to submit what he called “politically incorrect, but nonetheless factually true” information for its training. This raises significant ethical questions about editing data sources to better reflect Musk’s ideological stance. Such changes could undermine the objectivity that effective fact-checking depends on and, if carried over to Community Notes, diminish the credibility of the system.

If such an overhaul does occur, X could conceivably restrict AI Note Writers to Grok’s datasets, so that the bots never cite sources Musk does not endorse. That would compromise the balance and accuracy users expect from a fact-checking system. After all, it seems unlikely that Musk would champion AI fact-checkers whose findings frequently contradict his own claims.

Still, the initiative could be an important step toward improving the accuracy of information on the platform. Faster, data-driven responses would help ensure that questionable claims are challenged within the app, and could promote a more transparent dialogue among users.

In theory, AI Note Writers could significantly improve Community Notes. But Musk’s efforts to steer Grok’s output raise doubts about whether similar pressure could be applied to this program. The balance between technological advancement and editorial oversight will be critical to its success.

Either way, X is officially launching its Community Notes AI program today as a pilot, which it plans to expand over time. Users and industry observers will be watching closely to see whether it meaningfully changes how fact-checking works online.