Meta is pushing its generative AI image creation tools while also adding more transparency around the use of AI-generated images. The goal is to help users tell genuine content apart from artificially created content.

It is a step forward, though a limited one.

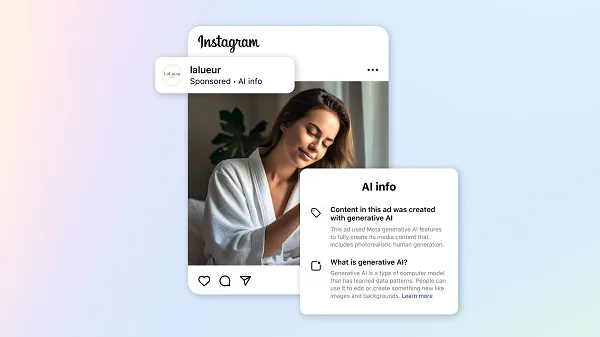

Today, Meta announced updates to its AI disclosures on ads that were created with Meta’s own AI tools. The change is meant to make it clearer how AI has shaped the ad content.

Meta began rolling out these labels last year, and it continues to refine their wording to make them easier for users to understand.

According to Meta:

“Our labels are designed to help people understand when images and videos have been created or significantly edited with our in-house generative AI tools. When an image or video is created or significantly edited with our generative AI creative features in our advertiser marketing tools, a label will appear in the three-dot menu or next to the ‘Sponsored’ label.”

Meta says it will keep improving these labels over time. For now, they apply only to ads that were created or “significantly edited” with its own AI tools.

“If an advertiser is using our in-house generative AI creative features and these tools do not result in significant edits to the image or video and do not include a photorealistic human, then we will not apply any AI labels.”

Meta does not specify what counts as “significant” in this context, but the update still adds a layer of transparency for AI-generated content made with Meta’s tools.

However, these rules apply only to ads and do not extend to regular posts, where AI-generated images have confused many Facebook users.

As illustrated, posts like this are generating substantial engagement within the app, with many users appearing to be unaware or unsuspecting of the involvement of AI creation tools.

Many of these posts are also not made with Meta’s own AI tools, whose output typically carries a distinctive watermark.

Moreover, Meta has indicated that it is also working on a solution for images produced by other AI applications:

“Providing transparency around our home-grown generative AI tools is a first step on our ads generative AI transparency journey. This year, we also plan to share more information on our approach to labeling ad images made or edited with non-Meta generative AI tools. We will continue to evolve our approach to labeling AI-generated content in partnership with experts, advertisers, policy stakeholders and industry partners as people’s expectations and the technology change.”

That could go a long way toward reducing misinformation and making the origin of such images clearer.

Of course, Meta is talking mainly about paid promotions here, and it is unclear whether the same criteria will apply to regular posts. Hopefully, Meta is also exploring ways to bring more transparency to all types of content.

The arrival of generative AI tools has caused significant confusion, though perhaps not in the ways that were anticipated. As the last election cycle approached, the prevailing concern was that political groups would use AI deepfakes to sway voters.

That has happened, but not at the scale many expected. Instead, a large share of misleading AI content takes the form of low-quality, clickbait images like the Facebook example shared above, posted by users who are chasing engagement, possibly with the intention of selling their Facebook Pages later.

This is less harmful, but it is still misleading, and it degrades the overall Facebook experience.

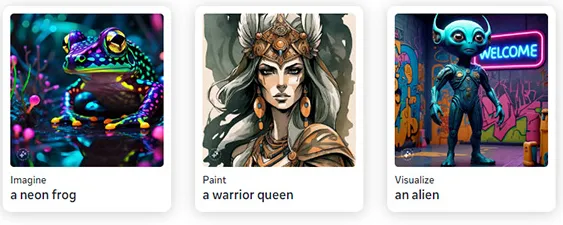

At the same time, Meta is stepping up promotion of its own AI creation tools, as shown by prompts like this appearing in user feeds.

While the motivation behind creating such images may be unclear, Meta appears determined to increase user engagement with its Meta AI chatbot, aiming to establish it as the leading AI chatbot globally.

Simultaneously, the company is striving to provide clearer explanations regarding the authenticity of images, as unlabeled AI-generated pictures can be quite misleading.

In fact, Meta could reduce the amount of such misleading content by not showing these in-feed prompts at all. However, the drive to lead in AI seems to take precedence over those concerns.

One of the most perplexing aspects of this AI push is that users have long complained about bots in social media apps degrading the human experience. Yet Meta is now intent on introducing more bots and artificial content into the ecosystem.

This seems contradictory, and it looks more like a demonstration of technological capability than an effort to deliver what is genuinely valuable. Nevertheless, as long as AI remains the dominant technology trend, Meta’s focus will likely stay on this area.