Meta is moving to a Community Notes model that will replace third-party fact-checking. The initiative is meant to let users themselves determine what counts as false information on Meta’s platforms. The rollout is imminent, and Meta has published an overview of how Community Notes will work, emphasizing user control over misinformation detection in its apps.

Taking cues from X’s model, Meta’s Community Notes feature lets users contribute their own explanations and context about the accuracy of posts on Facebook, Instagram, and Threads. It will not extend to advertisements, however. Ads can be flagged on X, so Community Notes will have a narrower scope on Meta’s platforms.

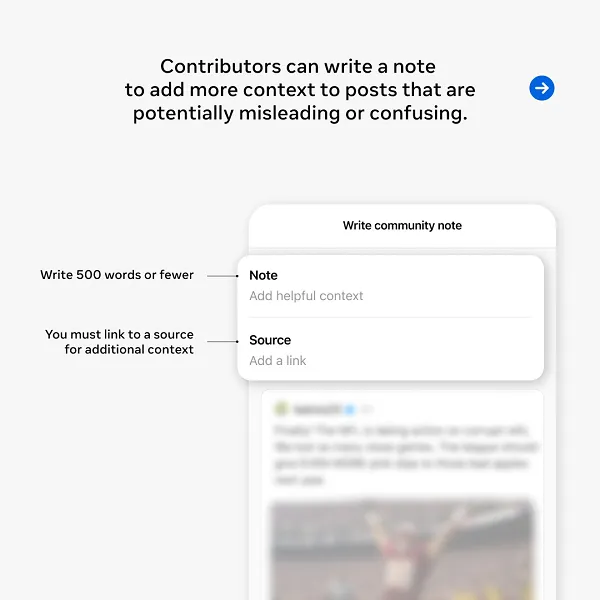

Approved Community Notes contributors will be able to explain their concerns in up to 500 characters. They are also required to include a reference link supporting their claims, so that users have access to context and further information about the content in question.

To enhance understanding, Meta has developed a dedicated mini-site that outlines this new approach to content moderation and misinformation management within its ecosystem.

However, Meta’s overview leaves out the key problem that has made Community Notes on X less effective at combating misinformation: users with opposing political views must agree that a note is warranted before it is displayed, which is a significant barrier to getting vital information in front of readers.

In its initial explanation of Community Notes, Meta stated:

“Just like they do on X, Community Notes will require agreement between people with a range of perspectives to help prevent biased ratings.”

The intention behind this requirement is to mitigate any potential bias by ensuring that diverse viewpoints are represented in the evaluation process, theoretically promoting a more balanced assessment of content.
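As a rough illustration of that agreement requirement, the sketch below shows a note only when raters from at least two perspective groups each independently find it helpful. The function name, the group labels, and both thresholds are hypothetical; neither Meta nor X has confirmed this rule, and X's published scoring code is considerably more involved.

```python
from collections import defaultdict


def note_is_shown(ratings, min_helpful_share=0.6, min_groups=2):
    """Decide whether a note is displayed (hypothetical rule, for illustration).

    ratings: list of (perspective_group, is_helpful) pairs.
    The note is shown only if at least `min_groups` groups rated it and
    every one of those groups rates it helpful often enough.
    """
    votes_by_group = defaultdict(list)
    for group, is_helpful in ratings:
        votes_by_group[group].append(is_helpful)

    # Agreement within a single group is not enough.
    if len(votes_by_group) < min_groups:
        return False

    # Every group must clear the helpfulness threshold on its own.
    return all(
        sum(votes) / len(votes) >= min_helpful_share
        for votes in votes_by_group.values()
    )


# One group is unanimous, the other disagrees: the note stays hidden.
contested = [("A", True), ("A", True), ("A", True),
             ("B", False), ("B", False), ("B", False)]
# Both groups find the note helpful: the note is shown.
bridged = [("A", True), ("A", True), ("B", True), ("B", True)]
```

Under a rule of this shape, `note_is_shown(contested)` is `False` no matter how accurate the note is, which is the failure mode critics point to on politically divisive posts.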

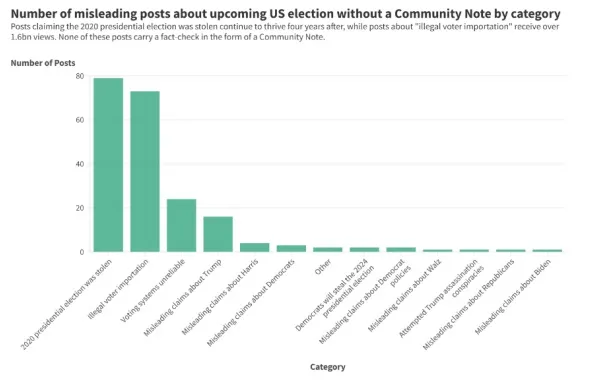

Nevertheless, research concerning the efficacy of Community Notes on X has revealed that many notes go unseen by users, even when they address clear instances of misinformation. This suggests that the mechanism intended to foster agreement may inadvertently stifle valuable discourse.

According to an analysis conducted by the Center for Countering Digital Hate (CCDH), an astounding 73% of Community Notes related to political issues are never displayed, despite offering critical context that could inform users about misleading claims.

The data points to the topics where consensus is hardest to reach, and it’s hardly surprising that contentious issues like election interference struggle to find agreement among people with differing ideological beliefs.

In the current climate, where even the President may amplify misleading or outright false information, the implications could be dire: a system that depends on cross-partisan agreement risks removing any safeguards that might restrict the spread of such claims.

Given Facebook’s expansive reach, the problem could be more pronounced there than on X.

Moreover, there is a lack of clarity regarding how Meta will assess the political affiliations of contributors.

On X, a contributor’s perspective is inferred from their past ratings of notes, on the principle that people who consistently rate notes the same way likely share similar viewpoints. X also infers political leanings from engagement patterns such as follows, likes, and shares, which together help establish an individual’s ideological stance.
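The rating-similarity idea can be sketched in a few lines. This is a simplified illustration, not X's or Meta's actual method: the function name, the contributor names, and the data are all made up, and it only measures how often two contributors gave the same rating to notes they both rated.

```python
def rating_agreement(ratings_a, ratings_b):
    """Share of co-rated notes on which two contributors gave the same rating.

    Each argument maps note_id -> True (helpful) or False (not helpful).
    Returns None when the two contributors have no rated notes in common.
    """
    shared_notes = ratings_a.keys() & ratings_b.keys()
    if not shared_notes:
        return None
    same = sum(ratings_a[note] == ratings_b[note] for note in shared_notes)
    return same / len(shared_notes)


# Hypothetical rating histories.
alice = {"n1": True, "n2": False, "n3": True}
bob = {"n1": True, "n2": False, "n4": True}
carol = {"n1": False, "n2": True, "n3": False}

alice_bob = rating_agreement(alice, bob)      # agree on every shared note
alice_carol = rating_agreement(alice, carol)  # disagree on every shared note
```

Contributors with high agreement would be treated as sharing a perspective, so a note would need support from pairs like Alice and Carol, not just Alice and Bob, to count as cross-perspective agreement.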

Facebook, by contrast, already has insight into users’ political orientations, as shown in the “Ad Preferences” section of the “Privacy Center.” This suggests Meta has the data to evaluate political leanings, but more transparency about how it will do so would help address potential shortcomings in the Community Notes framework.

It is also essential to recognize that Community Notes on X has faced challenges from organized groups that collaborate to manipulate the voting process on notes, aligning their support or opposition based on shared political ideologies.

Even Elon Musk has acknowledged that Community Notes is susceptible to manipulation by state-affiliated actors, admitting in a recent post that the system is being “gamed” by external entities. This raises significant concerns about the integrity of the Community Notes process and its ability to combat misinformation.

Despite these challenges, Meta has chosen not to include this critical context in its overview of the Community Notes initiative, which is being touted as a cutting-edge solution for identifying and limiting misinformation across its platforms that collectively engage over 3.3 billion users each month.

This staggering figure represents more than one-third of the global population, highlighting the immense scale and potential impact of Meta’s new approach.

Nevertheless, the optimism surrounding Community Notes may be misplaced. It remains to be seen whether this initiative will effectively address the pressing issue of misinformation on such a vast scale.

Meta says the Community Notes feature will be rolled out gradually over the coming months.