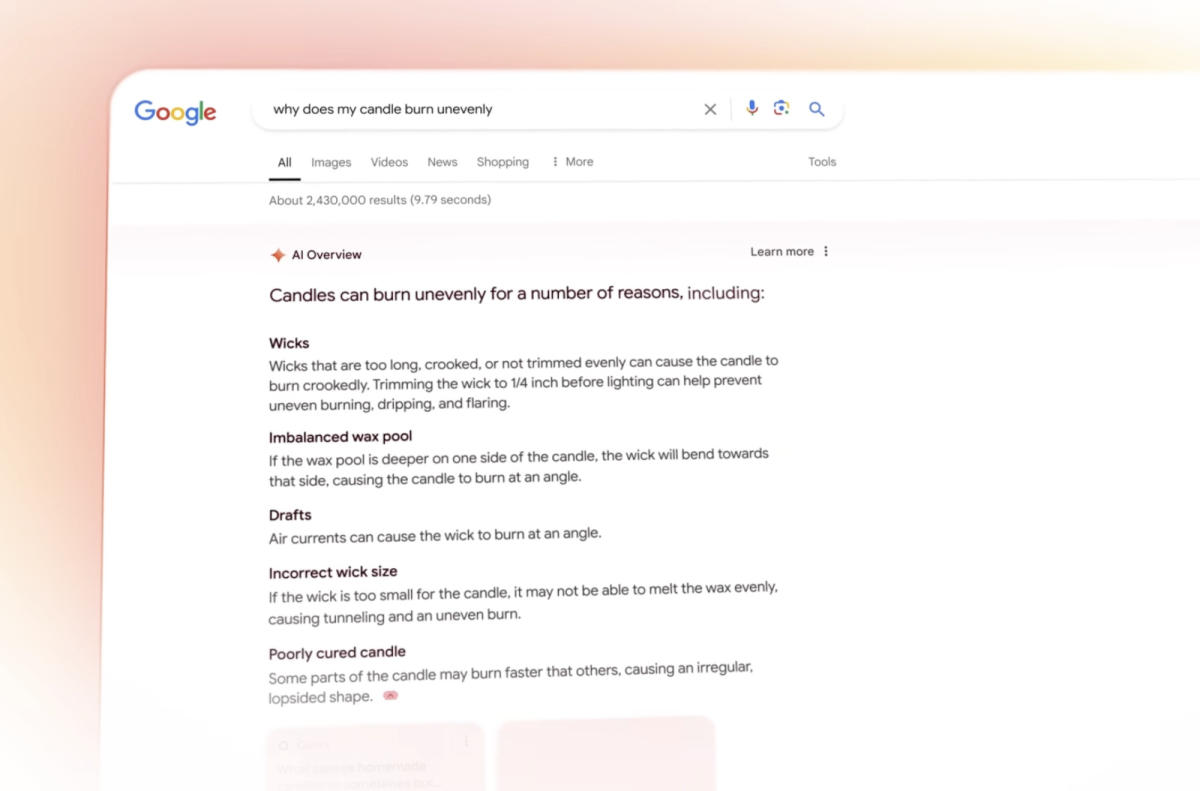

Liz Reid, the Head of Google Search, has admitted that the company's search engine returned some "odd, inaccurate or unhelpful AI Overviews" shortly after they rolled out to everyone in the US. The executive posted an explanation for Google's more peculiar AI-generated responses in a blog post, where she also announced that the company has implemented safeguards to help the new feature return more accurate and less meme-worthy results.

Reid defended Google and pointed out that some of the more egregious AI Overview responses going around, such as claims that it's safe to leave dogs in cars, are fake. The viral screenshot showing the answer to "How many rocks should I eat?" is real, but she said Google came up with that answer because a website had published satirical content on the topic. "Prior to these screenshots going viral, practically no one asked Google that question," she explained, so the company's AI linked to that website.

The Google VP also confirmed that AI Overviews told people to use glue to get cheese to stick to pizza, based on information taken from a forum. She said forums often provide "authentic, first-hand information," but they can also lead to "less-than-helpful advice." The executive didn't mention the other viral AI Overview answers going around, but as The Washington Post reports, the technology also told users that Barack Obama was Muslim and that people should drink lots of urine to help them pass a kidney stone.

Reid said the company tested the feature extensively before launch, but "there's nothing quite like having millions of people using the feature with many novel searches." Google was apparently able to identify patterns where its AI got things wrong by looking at examples of its responses over the past couple of weeks. It then put protections in place based on those observations, starting by tweaking its AI to better detect humor and satire. It has also updated its systems to limit the inclusion of user-generated content, such as social media and forum posts, in Overviews, since such content could give people misleading or even harmful advice. In addition, it has "added triggering restrictions for queries where AI Overviews were not proving to be as helpful" and has stopped showing AI-generated answers for certain health topics.