Meta is extending its teen safety features to Instagram accounts that feature children, even when those accounts are run by adults. Children under 13 are not allowed to create their own Instagram accounts, but Meta permits adults, such as parents or guardians, to run accounts on a child's behalf and post videos and photos of them. The company says these accounts are “overwhelmingly used in benign ways,” yet they remain vulnerable to predators who often leave sexual comments and ask for explicit images in direct messages.

In the coming months, Meta will give these adult-managed child accounts its strictest message settings to reduce the risk of inappropriate direct messages. It will also turn on Hidden Words for them automatically, so that account owners can filter unwanted comments out of their posts. Meta will stop recommending these accounts to users who have been blocked by teen users, making it less likely that predators will find them. In addition, the company will make the accounts harder for suspicious users to find in search and will hide comments from potentially suspicious adults on their posts. Meta says it will “take aggressive action” against accounts that violate its policies. Earlier this year, it removed 135,000 Instagram accounts for posting sexual comments on, or requesting explicit images from, adult-managed accounts featuring children. It also deleted a further 500,000 Facebook and Instagram accounts linked to those offending accounts.

Last year, Meta introduced teen accounts on Instagram, automatically placing users aged 13 to 18 under stronger privacy settings. It followed with teen accounts on Facebook and Messenger in April, and it is now testing AI age-detection technology to check the ages of users who may have misrepresented their birth dates, so that it can move them into teen accounts where appropriate.

Since then, Meta has steadily rolled out more safety features aimed at younger teens. In June, it released Location Notice, which tells younger teens when they are chatting with someone located in another country, since sextortion scammers often lie about where they are. Authorities have reported a significant rise in sextortion cases, in which children are coerced online into sending explicit images. Meta has also introduced a nudity protection feature that blurs images in direct messages when nudity is detected, because sextortion scammers often use nude images to manipulate victims into sending their own in return.

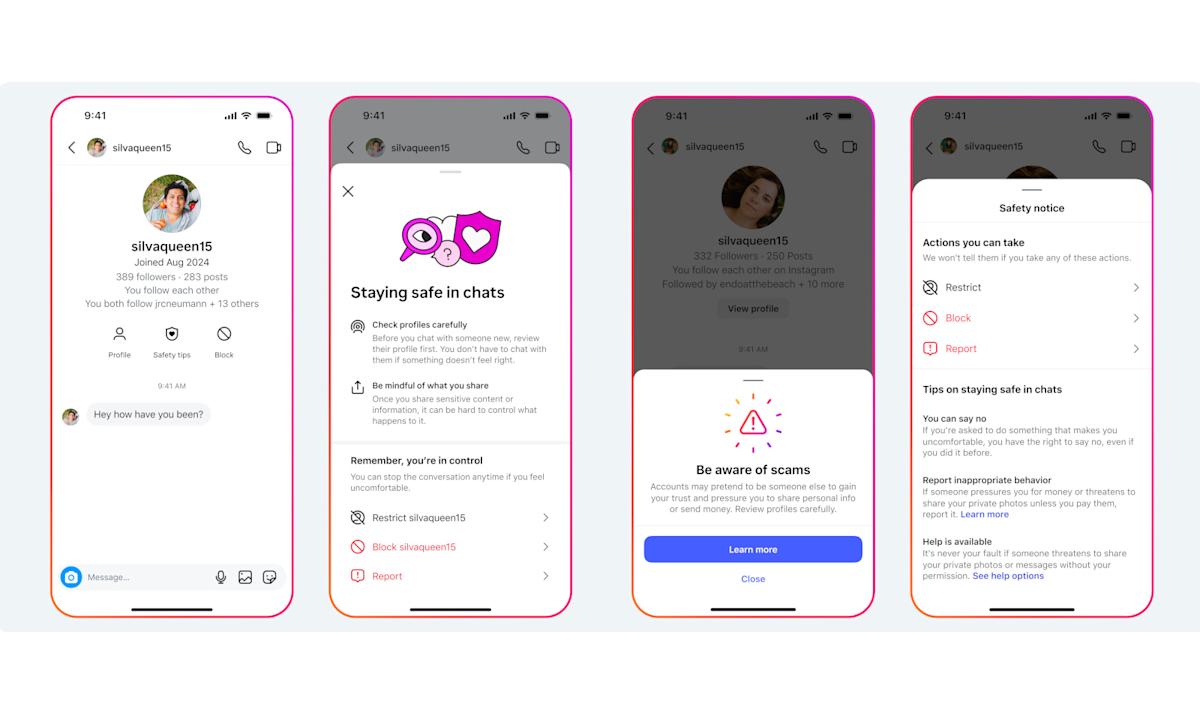

Today, Meta is also introducing new ways for teens to find safety tips. In a direct message, they can now tap the “Safety Tips” icon at the top of the conversation screen, which opens options to restrict, block, or report the other user. Meta has also added a combined block-and-report option in direct messages, so users can take both actions with a single tap.