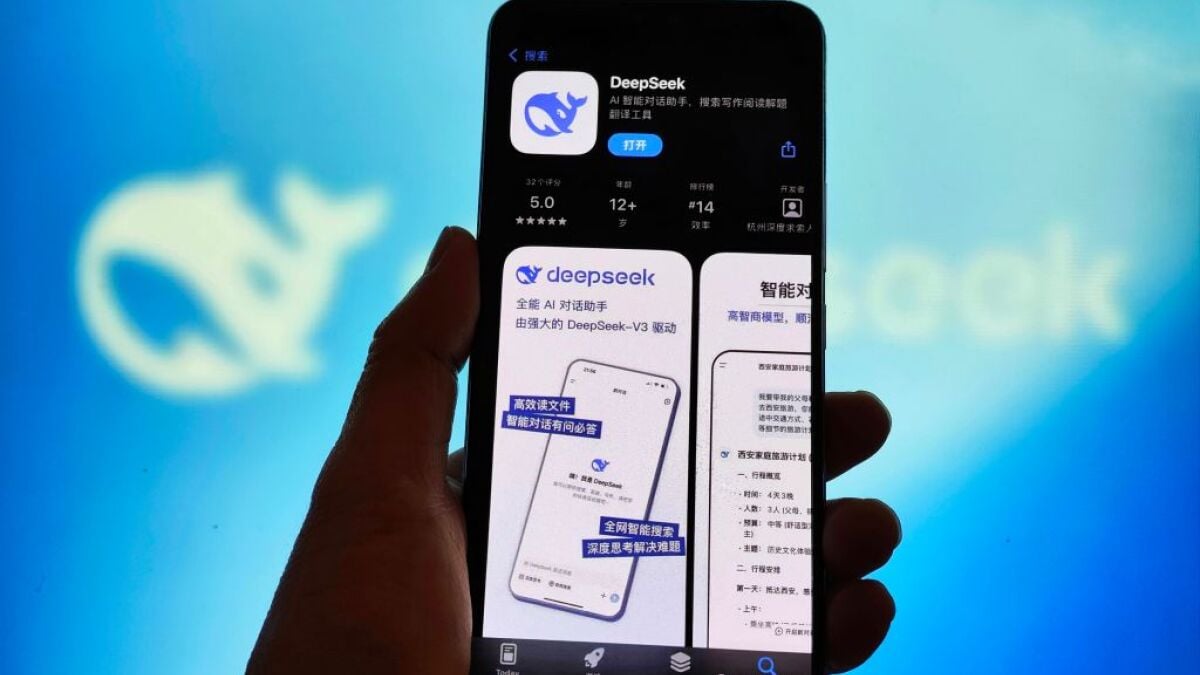

DeepSeek is making headlines everywhere, capturing the attention of tech enthusiasts and investors alike.

The company’s R1 model is open source and was trained at a fraction of the cost of other cutting-edge AI models, yet it reportedly rivals, or even surpasses, the performance of ChatGPT.

This achievement has sent shockwaves through Wall Street, triggering a sharp decline in tech stocks and prompting investors to reconsider how much money it takes to develop competitive AI models. According to DeepSeek’s engineers, training the underlying model took about 2.788 million GPU hours on a cluster of roughly 2,000 Nvidia GPUs, at a reported cost of about $6 million, in stark contrast to the estimated $100 million spent on training OpenAI’s GPT-4.

DeepSeek’s cost efficiency challenges the prevailing notion that larger models and more extensive datasets are essential for superior AI performance. As debate swirls over the implications of DeepSeek’s capabilities and the threat it may pose to established AI companies like OpenAI, industry experts have weighed in on this disruptive development.

Understanding the Paradigm Shift: Why Bigger AI Models Aren’t Always Better

Amid trade restrictions and difficulties accessing Nvidia GPUs, the China-based DeepSeek team had to innovate to develop and train its R1 model. That such a powerful model could be built for a reported $6 million has come as a revelation to investors and industry watchers.

However, seasoned AI experts were not caught off guard. Timnit Gebru, a prominent figure in AI ethics who was dismissed from Google for raising concerns about AI bias, shared her experience on X, questioning the industry’s obsession with constructing the largest models. She reflected on her time at Google, where her inquiries about the rationale behind this fixation were met with dismissal. Her insights underscore the need for a paradigm shift in how AI models are evaluated, emphasizing functionality and efficiency over size.

Sasha Luccioni, the climate and AI lead at Hugging Face, pointed out that AI investment often rests more on marketing hype than on technical merit. She expressed disbelief that the mere suggestion that a high-performing LLM can be built without an exorbitant number of GPUs is enough to create such a stir in the industry.

Decoding the Impact of DeepSeek R1 on the AI Landscape

DeepSeek’s R1 has demonstrated performance comparable to OpenAI’s o1 model across several key benchmarks. In tests of math skills, coding proficiency, and general knowledge, R1 slightly surpassed, matched, or fell just short of o1. In other words, R1 joins a field of models, including Anthropic’s Claude, Google’s Gemini, and Meta’s open-source Llama, that already deliver similar capabilities to users.

The frenzy surrounding R1 is largely attributed to its extremely low development cost. AI research scientist Gary Marcus noted, “It’s not smarter than earlier models, just trained more cheaply,” highlighting the model’s cost efficiency rather than its intelligence or capabilities.

The fact that DeepSeek has successfully developed a model that can compete with OpenAI’s offerings is a significant achievement in itself. Andrej Karpathy, a co-founder of OpenAI, commented on X, stating that while large GPU clusters are still necessary for frontier LLMs, there is much to learn about optimizing data and algorithms. He emphasized that R1 serves as a valuable demonstration of how efficiency can be achieved in AI development.

Wharton AI professor Ethan Mollick emphasized that the focus should not be solely on the capabilities of DeepSeek R1 but on which models users can actually access. While he acknowledged that DeepSeek has created a highly competent model, he remarked that it does not inherently outperform models like o1 or Claude. However, he believes that the attention R1 is receiving, together with its accessibility, is exposing a broader audience to the advanced capabilities of AI, particularly people who have previously relied on less powerful “mini” models.

Celebrating the Triumph of Open Source AI Models in the Tech Arena

The impressive emergence of DeepSeek R1 marks a significant victory for the open source AI community, which advocates for democratizing access to powerful AI models. This approach not only fosters transparency and innovation but also encourages healthy competition within the industry. Yann LeCun, the chief AI scientist at Meta, remarked, “For those who think ‘China is surpassing the U.S. in AI,’ the reality is that ‘open source models are surpassing closed ones,’” highlighting the importance of open-source developments in the ongoing AI race.

AI expert Andrew Ng did not explicitly address the significance of R1 being open source but emphasized that the disruption caused by DeepSeek is beneficial for developers. He pointed out that it provides access to advanced capabilities that are often restricted by major tech companies. Ng stated, “Today’s ‘DeepSeek selloff’ in the stock market — attributed to DeepSeek V3/R1 disrupting the tech ecosystem — is another sign that the application layer is a great place to be,” reinforcing that a hyper-competitive foundation model layer is advantageous for those creating applications.
